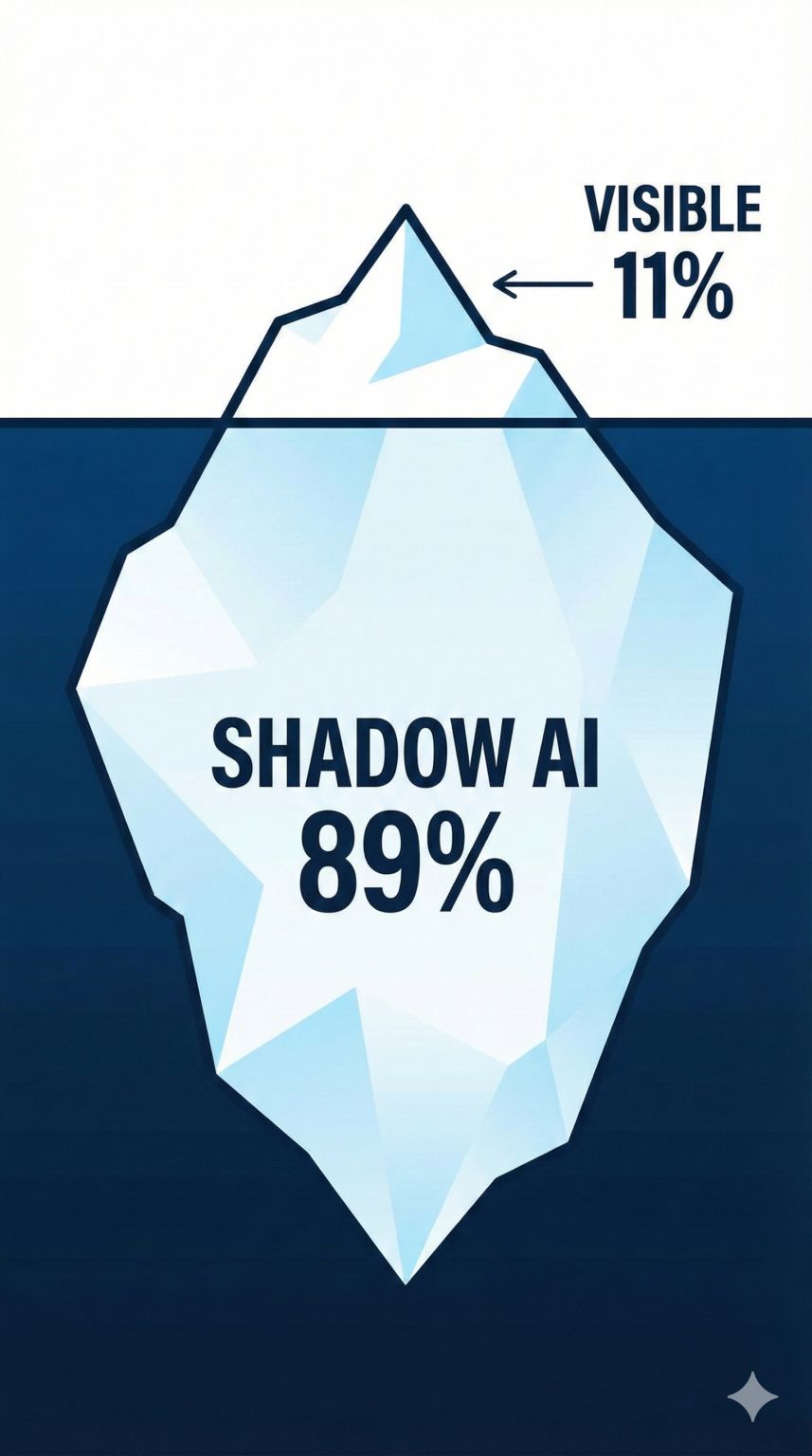

TL;DR: Shadow AI is unauthorized AI tool usage in enterprise teams that exists because organizations try to ban rather than govern AI adoption. Engineering leaders should create safe adoption frameworks, governance structures, and explicit permission models instead of driving adoption underground.

99% of people treat ChatGPT like Google. That’s why their results are average. Here is the 4-step framework I use to automate complex workflows (no code required).

The Hard Truth: If the AI output is bad, it is a skill issue. “The problem is me.” LLMs are not magic knowing-machines. They are prediction engines. If your input is vague, the model fills the gaps with its most probable guess, and that is where hallucinations come from. To fix this, stop “asking questions” and start “programming with words.” Here is the architecture to go from Zero to Hero:

Phase 1: The Setup (Hack the Probability)

You must narrow the search space before you ask for the output.

• Persona: Don’t just ask for code. Say, “Act as a Senior Site Reliability Engineer.”
• Context: Never assume knowledge. Treat the AI like a brilliant intern who started 5 minutes ago.
• Permission to Fail: This is critical. Explicitly tell the AI: “If you don’t know the answer, state that you don’t know.” This single sentence cuts down on hallucinations, because the model no longer has to produce an answer at any cost.

Phase 2: The Logic (Chain of Thought)

Most people ask for the result immediately. This causes errors. Instead, force the AI to “show its work.”

• The Prompt: “Think step-by-step before answering.”
• The Result: The AI reasons through the logic before generating the final output, which tends to improve accuracy on multi-step problems.

Phase 3: The Refinement (Battle of the Bots)

If you need a bulletproof result, use the “Playoff Method.” Assign 3 distinct personas (e.g., an Engineer, a PR Manager, an Angry Customer). Ask them to critique each other’s drafts before merging them into a final answer.

Phase 4: The Meta Skill

You cannot prompt clearly if you cannot think clearly. If you are struggling with a prompt, close the tab. Open a notebook. Write down exactly what you want the system to do. Think first. Prompt second.

P.S. Which of these techniques do you use the most? The “Permission to Fail” rule changed my workflow entirely.
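To make "programming with words" concrete, here is a minimal Python sketch of how Phases 1 to 3 could be assembled as plain strings. The names `PromptSpec`, `build_prompt`, and `playoff_prompts` are hypothetical, the example context and task are made up for illustration, and no model API is called; you would paste or send the resulting text to whatever tool you use.

```python
from dataclasses import dataclass

# Phrases taken from the framework above.
PERMISSION_TO_FAIL = "If you don't know the answer, state that you don't know."
CHAIN_OF_THOUGHT = "Think step-by-step before answering."


@dataclass
class PromptSpec:
    persona: str  # Phase 1: who the model should act as
    context: str  # Phase 1: background the model cannot be assumed to know
    task: str     # what you actually want done


def build_prompt(spec: PromptSpec) -> str:
    """Combine the Phase 1 setup and the Phase 2 instruction into one prompt."""
    parts = [
        f"Act as {spec.persona}.",
        f"Context: {spec.context}",
        PERMISSION_TO_FAIL,
        CHAIN_OF_THOUGHT,
        f"Task: {spec.task}",
    ]
    return "\n".join(parts)


def playoff_prompts(draft: str, personas: list[str]) -> list[str]:
    """Phase 3: build one critique prompt per persona for the same draft."""
    return [
        f"Act as {p}. Critique the draft below and list concrete fixes.\n\n{draft}"
        for p in personas
    ]


# Illustrative inputs only (assumed, not from a real system).
spec = PromptSpec(
    persona="a Senior Site Reliability Engineer",
    context="We run a small web service; the on-call engineer is new to the team.",
    task="Write a checklist for diagnosing elevated 5xx error rates.",
)
prompt = build_prompt(spec)
critiques = playoff_prompts(
    "DRAFT TEXT", ["an Engineer", "a PR Manager", "an Angry Customer"]
)
print(prompt)
```

Keeping the persona, context, and task as separate fields is a design choice: it forces you to write each one down before prompting, which is the Phase 4 habit in code form.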

Frequently Asked Questions

What is shadow AI?

Shadow AI refers to employees using AI tools like ChatGPT, Claude, or Copilot without explicit organizational approval. It exists because companies restrict AI access rather than establishing governance frameworks, pushing adoption into the shadows instead of managing it safely.

How can you detect shadow AI in your organization?

Monitor network traffic for calls to AI service APIs, survey developers anonymously about the tools they use, review browser usage patterns, and watch for jumps in productivity that are not matched by corresponding code changes. Many teams also implement AI-aware code analysis that flags unusually uniform code patterns typical of AI-generated code.
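As a rough illustration of the network-monitoring idea, the Python sketch below counts requests to a small watchlist of AI hostnames in proxy log lines. The log format (`<timestamp> <user> <host> <bytes>`), the function name `count_ai_requests`, and the sample lines are all assumptions for the example; real proxy logs will look different and the watchlist would need to match the tools relevant to your organization.

```python
from collections import Counter

# Assumed watchlist of AI service hostnames; extend as needed.
AI_HOSTS = {"api.openai.com", "chatgpt.com", "api.anthropic.com", "claude.ai"}


def count_ai_requests(log_lines):
    """Count requests per (user, host) for hosts on the watchlist.

    Assumes a hypothetical log format: "<timestamp> <user> <host> <bytes>".
    """
    hits = Counter()
    for line in log_lines:
        fields = line.split()
        if len(fields) < 3:
            continue  # skip malformed lines
        user, host = fields[1], fields[2].lower()
        if host in AI_HOSTS:
            hits[(user, host)] += 1
    return hits


# Made-up sample lines for illustration only.
sample = [
    "2024-05-01T09:00:00Z alice api.openai.com 2048",
    "2024-05-01T09:01:00Z bob example.com 512",
    "2024-05-01T09:02:00Z alice api.openai.com 4096",
    "2024-05-01T09:03:00Z carol claude.ai 1024",
]
hits = count_ai_requests(sample)
for (user, host), n in sorted(hits.items()):
    print(user, host, n)
```

A count like this is probably most useful in aggregate, as input to deciding which tools to approve, rather than as a way to single out individuals.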

Should companies ban ChatGPT at work?

No. Banning drives adoption underground and increases security risk. Instead, companies should establish clear policies defining which use cases are approved, what data cannot be used, and what governance checkpoints exist. Safe adoption beats prohibition every time.

How can you create an AI usage policy that works?

Define three tiers: approved tools with data guardrails, restricted contexts (no customer data, no credentials), and explicit escalation for novel use cases. Train teams on what to do with AI output, not just which tools to use. The policy should enable productivity while managing risk, not shut down innovation.
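One way to picture the three tiers is as a small decision function. The Python sketch below is illustrative only: the tool names, data tags, and use-case list are hypothetical placeholders, and routing an unapproved tool to escalation (rather than denying it outright) is an assumption chosen to match the "govern, don't ban" stance above.

```python
from dataclasses import dataclass, field

# Hypothetical policy data; replace with your organization's own lists.
APPROVED_TOOLS = {"chatgpt-enterprise", "copilot"}                      # tier 1
RESTRICTED_DATA = {"customer_data", "credentials"}                      # tier 2
KNOWN_USE_CASES = {"code_review", "documentation", "test_generation"}  # tier 3 baseline


@dataclass
class Request:
    tool: str
    use_case: str
    data_tags: set = field(default_factory=set)


def evaluate(req: Request) -> str:
    """Return "allow", "deny", or "escalate" for a proposed AI usage."""
    # Tier 2: restricted data never goes to an AI tool, regardless of the tool.
    if req.data_tags & RESTRICTED_DATA:
        return "deny"
    # Tier 1: unapproved tools go to review instead of being silently blocked
    # (an assumption of this sketch).
    if req.tool not in APPROVED_TOOLS:
        return "escalate"
    # Tier 3: novel use cases get an explicit escalation path.
    if req.use_case not in KNOWN_USE_CASES:
        return "escalate"
    return "allow"


print(evaluate(Request("copilot", "code_review")))
print(evaluate(Request("copilot", "code_review", {"credentials"})))
print(evaluate(Request("copilot", "contract_drafting")))
```

Checking restricted data first means the data guardrail holds even for approved tools, which mirrors the ordering of the tiers in the policy.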