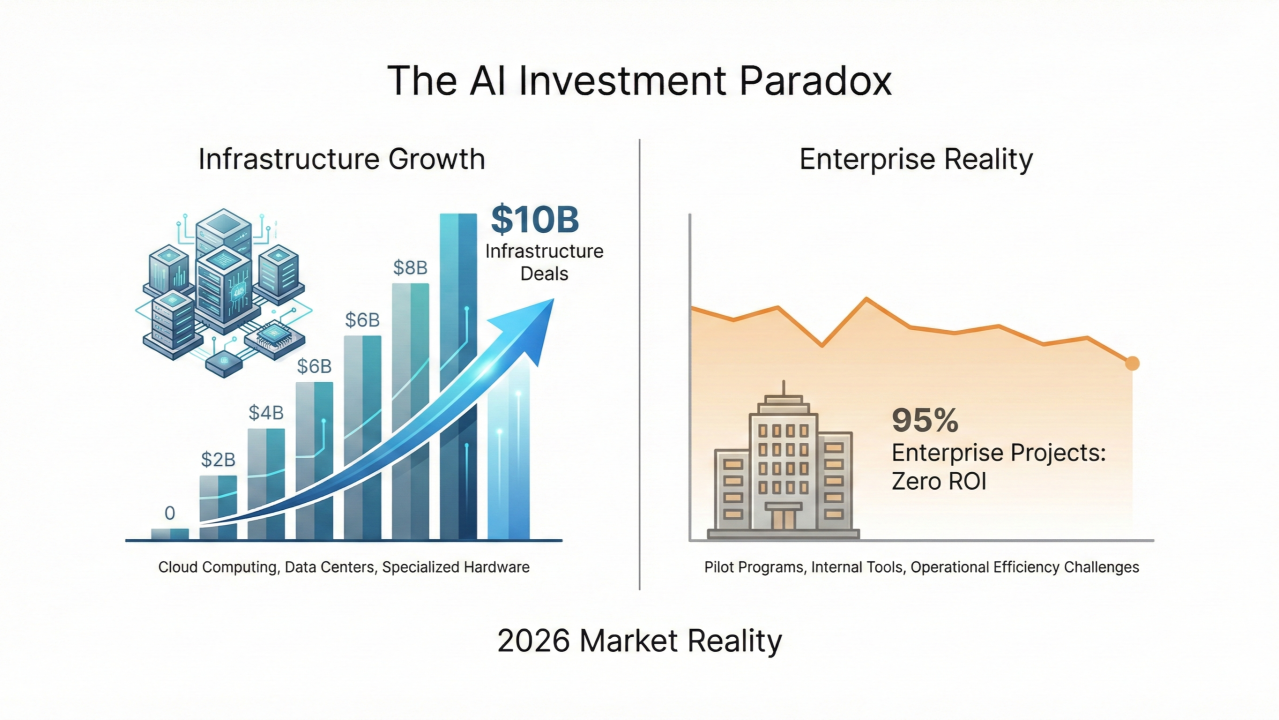

95% of companies are getting zero return on their AI investments.

Let that sink in. Despite $37 billion spent on GenAI in 2025 alone, MIT’s “GenAI Divide” report shows the vast majority of organizations have nothing to show for it—no measurable ROI, no impact on P&L, just expensive pilots gathering dust.

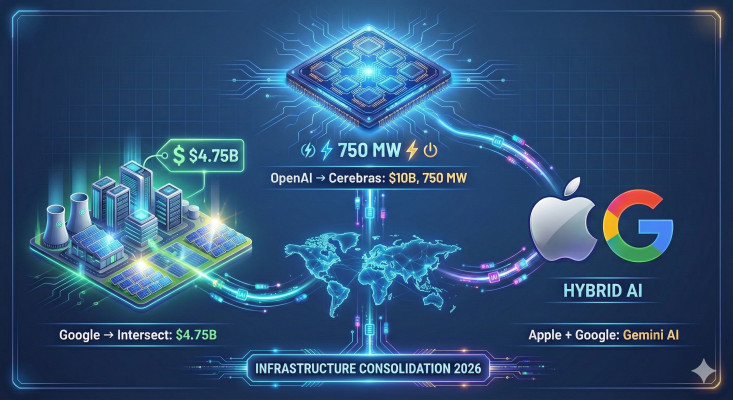

But here’s the paradox: This week, OpenAI signed a $10 billion deal with Cerebras for AI compute. Apple chose Google Gemini over OpenAI to power Siri. Infrastructure spending is hitting records while enterprise execution collapses.

So what’s really happening? AI capability is exploding—but enterprise execution is failing at scale.

The Infrastructure Layer Is Thriving

The compute layer is consolidating fast—but it’s not just about chips anymore:

OpenAI & Cerebras: $10B multi-year deal for 750 MW of compute power focused on inference, not training. This isn’t about building bigger models, it’s about serving 900 million weekly users faster and cheaper. OpenAI is diversifying away from NVIDIA’s 98% data center dominance, betting on Cerebras’ Wafer Scale Engine for 15x faster inference. But here’s what most people miss: 750 MW isn’t about speed, it’s about availability. Energy is becoming the new bottleneck. As one industry podcast put it: “The constraint isn’t model parameters anymore. It’s energy cost and energy access.”

Google & Power Grids: While everyone watched chip deals, Google quietly spent $4.75 billion acquiring Intersect Power, a data center energy infrastructure company. By 2028, Intersect will deliver 10.8 GW of capacity (roughly 5x the Hoover Dam’s ~2 GW). First project: 640 MW solar + 1.3 GWh battery storage in Texas, online late spring 2026. Google’s strategy: own the full stack from power generation to inference. Tech companies that were “software-first” now control nuclear reactors and solar farms.

Apple & Google: In what may be the deal of the decade, Apple integrated Google Gemini into Apple Intelligence. Translation: even Apple admits it can’t win the foundation model race alone. The strategy is hybrid: small models on-device for privacy, Gemini’s cloud power for complex reasoning. Privacy remains paramount via Private Cloud Compute architecture.

Hardware economics shifting: Gartner forecasts $2.52 trillion in global AI spending for 2026 (+44% YoY). But here’s the kicker: 54% goes to “iron and silicon”: servers, data centers, chips, and now power infrastructure. Software, the layer that creates actual business value, gets the scraps.

The infrastructure bet is clear. The execution bet? Not so much.

The Enterprise Layer Is Collapsing

While Big Tech builds, enterprises burn cash:

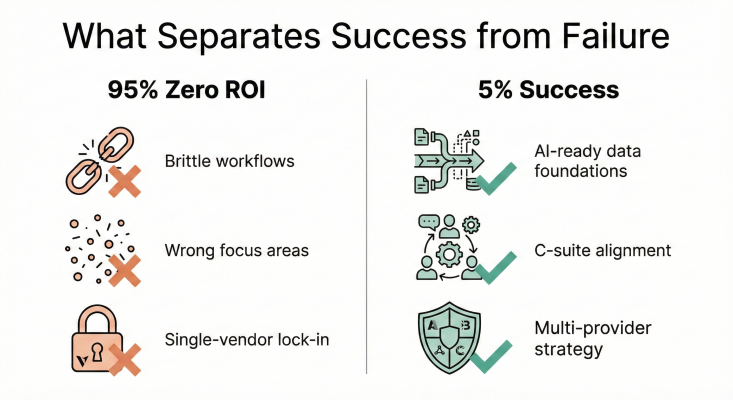

95% zero ROI: MIT’s report isn’t an outlier. Forbes, Baytech Consulting, and multiple 2026 analyses confirm it. The failure patterns are consistent: brittle workflows, lack of institutional memory in models, bad “build vs. buy” decisions (67% of “build” attempts fail), and wrong focus areas (too much on sales/marketing, not enough on back office operations where automation delivers immediate savings).

The 5% vs. 95% split: What separates winners from failures isn’t technology—it’s infrastructure maturity, governance, and team structure. The 5% who succeed have AI-ready data foundations, strong data quality controls, cross-functional teams with C-suite alignment, and a ruthless focus on measurable business outcomes.

The efficiency illusion: Baytech’s analysis of the METR 2025 study reveals a sobering truth: a 19% net slowdown on complex coding tasks when using AI. Jakob Nielsen predicts the dominant metric for 2026 will shift from “tokens generated” to “tasks completed autonomously.” In other words: stop counting AI activity, start counting AI results.

Big Tech Is Hedging—And So Should You

Three strategic signals this week matter:

Multi-provider is the new default: Apple didn’t pick “the best” model, it picked a portfolio strategy. Microsoft hedges with Anthropic. OpenAI hedges with Cerebras. Your single-vendor assumption just became expensive.

From models to systems: Satya Nadella’s January blog post marks a strategic pivot. Microsoft is done selling “magic models.” The new doctrine: AI as integrated systems with memory, context, and process scaffolding, not chatbot replacements. Nadella’s warning about “AI slop” (low-quality generated content) signals the market’s fatigue with hype over substance.

Agentic AI enters production: Google launched Universal Commerce Protocol, enabling AI agents to research, compare, and purchase end-to-end. Anthropic released Cowork for desktop task automation. Gartner predicts 40% of enterprise apps will have task-specific agents by the end of 2026 (up from 5% today). The era of “pilot purgatory” is ending: agents are going into production.

The Reality Check

The opportunity is real. Cognizant claims AI could unlock trillions in productivity. Accenture reports that 86% of C-suite executives plan to increase AI spend. But the blank checks are ending.

As Domino Data Lab’s Jarrod Vawdrey put it: “2026 is the year when the music stops. CFOs are done writing blank checks for ‘AI innovation’ that can’t be tied to actual business results.”

Your Board will ask this quarter: “What’s our AI ROI?”

Are you in the 5% with an answer, or the 95% still running pilots?

Last two weeks in Enterprise AI

#1: The Chip Wars Reshape Economics

NVIDIA’s market dominance (98% of data center AI) faces its first real challenge. OpenAI’s $10B Cerebras deal proves alternatives exist for inference workloads—where 15x speed improvements matter more than raw training power. Meanwhile, China’s Knowledge Atlas released the first multimodal model trained entirely on domestic Huawei chips, signaling supply chain diversification is accelerating globally.

#2: Agentic AI Goes Mainstream

This isn’t hype anymore. Google’s Universal Commerce Protocol enables AI agents to handle full purchase workflows autonomously. Shopify deployed six industry-specific AI agents (via Aible) on NVIDIA hardware, pre-trained on KPIs to detect and explain customer acquisition changes at scale. Anthropic’s Cowork gives agents scoped folder access and parallel task execution for desktop workflows.

Gartner’s prediction: enterprise apps with agents jump from 5% to 40% by end-2026. The 26-34% ROI from current GenAI scenarios (per TechTarget) will come from automation, not more chatbots.

#3: Healthcare AI Hits Production Scale

NVIDIA and Eli Lilly announced a $1B AI-powered drug discovery lab partnership. OpenAI launched HIPAA-compliant tools for clinical, research, and admin tasks, acquiring startup Torch for unified medical data handling. Regulated industries are moving from pilots to production—finally.

Why it matters: If healthcare (the most regulated industry) is scaling AI, your compliance objections just got weaker.

#4: Meta Pivots From Metaverse to “Physical AI”

Meta cut over 1,000 jobs (10% of Reality Labs staff) to shift resources from VR/metaverse to AI wearables. The Ray-Ban Meta smart glasses are a hit; VR goggles are not. Zuckerberg’s new bet is “Physical AI”: assistants that see and hear the real world, not virtual ones. This is multimodal AI in action.

Signal: When Big Tech kills billion-dollar bets to double down on AI, pay attention.

#5: The Reports That Changed The Narrative

- MIT’s “GenAI Divide”: 95% zero ROI from $37B spent in 2025. Multiple 2026 sources (Forbes, Baytech, Articsledge) corroborate. The methodology focuses on large, transformational projects, not individual productivity gains (where Wharton finds 74% positive ROI when including “Shadow AI” and Copilot usage).

- Conflicting data: IT professionals report 67% positive ROI (per Atomicwork) because IT problems are well-defined, data is structured, and metrics are precise (e.g., MTTR for password resets). The lesson: ROI varies wildly by use case. Back office wins, vague “innovation” fails.

#6: Security and Governance Tighten

Only 30% of executives are satisfied with AI ROI (per CIO.com), and that is driving a governance shift. 71% use GenAI, but only 55% of C-suite executives feel prepared for disruption. The focus is moving to data quality, SSO/MFA enforcement, zero-trust models, and compliance frameworks. As adoption scales, so do risks, and boards are waking up.

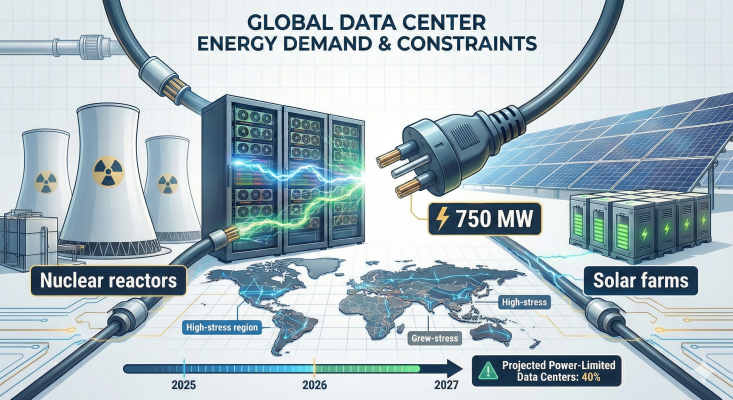

#7: Energy: The 2027 Bottleneck Nobody’s Talking About

While everyone obsesses over model parameters, the real constraint is emerging: power supply.

The numbers are brutal: By 2027, 40% of AI data centers will be operationally limited by power availability, not compute capacity. PJM Interconnection (serving 65 million Americans) will be 6 GW short of reliability requirements. U.S. data center electricity consumption jumped from 183 TWh (2024) to a projected 426 TWh by 2030, a 133% increase in six years.
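The consumption figures are easy to sanity-check. The sketch below recomputes the total increase from the two TWh values quoted above and, as an added assumption, derives the compound annual growth rate those endpoints would imply if growth were smooth (roughly 15% a year). The function names are illustrative.

```python
def percent_increase(start: float, end: float) -> float:
    """Total percentage increase from start to end."""
    return (end - start) / start * 100


def annual_growth_rate(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, as a percentage (assumes smooth growth)."""
    return ((end / start) ** (1 / years) - 1) * 100


START_TWH, END_TWH = 183, 426  # 2024 figure and 2030 projection, from the text
YEARS = 2030 - 2024

total = percent_increase(START_TWH, END_TWH)
cagr = annual_growth_rate(START_TWH, END_TWH, YEARS)

print(f"Total increase: {total:.0f}%")
print(f"Implied compound annual growth: {cagr:.1f}%")
```

The annual rate is the more useful planning number: it is what a capacity roadmap has to absorb every single year, not just by 2030.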

Big Tech’s response: They’re not waiting for utilities. Google acquired Intersect Power for $4.75B to build “energy parks”: co-located solar, batteries, and data centers delivering 10.8 GW by 2028. Microsoft is restarting Three Mile Island (835 MW dedicated to AI, commercial operation expected mid-2027). Amazon invested $20B in a nuclear-powered data center campus.

The strategic shift: Companies that were “software-first” now own the full stack, from nuclear reactors to GPUs to API endpoints.

For CTOs: Your 2026 AI roadmap needs a power budget, not just a compute budget. Questions to ask:

- What’s your energy cost per token, in watt-hours?

- Which vendors have secured energy access?

- Are you planning inference capacity without checking if the grid can support it?

The insight: OpenAI’s 750 MW Cerebras deal isn’t just about inference speed, it’s about energy availability. When power becomes the bottleneck, securing it becomes competitive advantage.

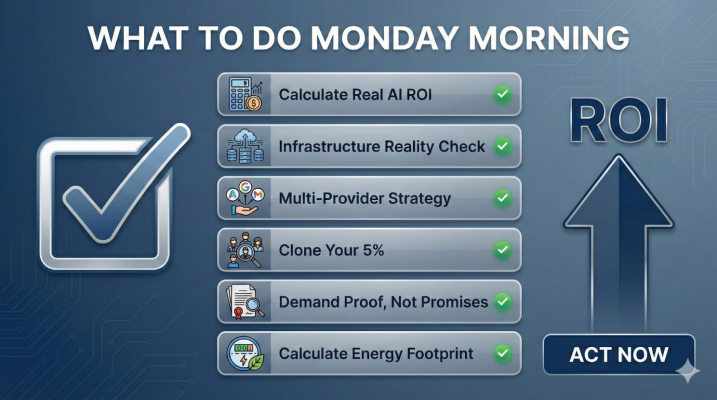

What To Do Monday Morning

1. Calculate Your Real AI ROI

Not “potential.” Not “pilot learnings.” Hard numbers: Total AI spend (licenses + infrastructure + FTE time) vs. measurable value delivered (time saved × hourly rate, or revenue/cost impact).

If you can’t answer in 60 seconds, you’re in the 95%.
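To make the 60-second answer concrete, here is a minimal sketch of that calculation. The class and field names are hypothetical and the numbers are purely illustrative, not drawn from any report. It sums time savings and revenue/cost impact, which assumes the two don't overlap; if they do, count only one.

```python
from dataclasses import dataclass


@dataclass
class AISpend:
    """Annual cost inputs, all in one currency (hypothetical fields)."""
    licenses: float
    infrastructure: float
    fte_hours: float        # staff hours spent building and running AI
    fte_hourly_rate: float  # loaded hourly cost of that staff time

    def total(self) -> float:
        return self.licenses + self.infrastructure + self.fte_hours * self.fte_hourly_rate


@dataclass
class AIValue:
    """Measured value delivered over the same period."""
    hours_saved: float
    hourly_rate: float
    revenue_or_cost_impact: float = 0.0

    def total(self) -> float:
        return self.hours_saved * self.hourly_rate + self.revenue_or_cost_impact


def ai_roi(spend: AISpend, value: AIValue) -> float:
    """Return ROI as a fraction: (value - spend) / spend."""
    cost = spend.total()
    if cost <= 0:
        raise ValueError("total spend must be positive")
    return (value.total() - cost) / cost


# Illustrative numbers only.
spend = AISpend(licenses=200_000, infrastructure=100_000, fte_hours=2_000, fte_hourly_rate=100)
value = AIValue(hours_saved=5_000, hourly_rate=80, revenue_or_cost_impact=150_000)
roi = ai_roi(spend, value)

print(f"Spend: {spend.total():,.0f}  Value: {value.total():,.0f}  ROI: {roi:.0%}")
```

The point of the structure is that every input is a measured number someone can audit. "Potential" has no field.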

2. Infrastructure Reality Check

The 5% ROI winners have:

- AI-ready data foundations (unified architectures, not data silos)

- Strong data quality controls (garbage in = garbage out)

- Cross-functional teams (CTO + CFO + CHRO alignment, not IT silo)

Do you? If not, stop buying more AI tools and fix your data layer first.

3. Build Your Multi-Provider Strategy

Apple chose Google over OpenAI. Microsoft hedges with Anthropic. OpenAI hedges with Cerebras. Your single-vendor lock-in just became a liability.

Action: Audit your vendor dependencies. Where are you exposed? Where can you diversify? The “best model” changes every 90 days, but your architecture shouldn’t.
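One way to keep the architecture stable while models change is to hide vendors behind a single call signature and try them in priority order. The sketch below is a simplified illustration under that assumption: `complete_with_fallback` and the two stand-in providers are hypothetical, and real providers would wrap each vendor's own SDK.

```python
from typing import Callable, Sequence

# A provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has errored."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real system would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in providers for illustration only.
def primary(prompt: str) -> str:
    raise TimeoutError("primary vendor unavailable")


def secondary(prompt: str) -> str:
    return f"[secondary] answer to: {prompt}"


used, answer = complete_with_fallback(
    "Summarize Q4 spend", [("primary", primary), ("secondary", secondary)]
)
print(used, "->", answer)
```

Swapping or reordering vendors then means editing one list, not rewriting every caller.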

4. Clone Your 5%

Find the teams actually getting AI ROI in your org. What do they have that others don’t?

Hint from research: It’s not fancier models. It’s cleaner data, clearer use cases, and tighter feedback loops. Clone their approach before building another “AI Center of Excellence.”

5. Demand Proof, Not Promises

2026 is the year vendors must show ROI, not roadmaps. As Jan Van Hoecke (iManage) warns: “2026 is the year when agentic AI will get a reality check, as the gap between marketing promises made in 2025 and their actual competencies will become starkly visible.”

Stop accepting “transformational potential” as an answer.

6. Calculate Your Energy Footprint

New metric for 2026: watt-hours per million tokens. OpenAI secured 750 MW. Google acquired $4.75B in power infrastructure. Microsoft is restarting nuclear reactors.

Action: Audit your inference costs. What’s your energy overhead? Which of your vendors has secured long-term power access? Are you building a 2027 roadmap assuming unlimited grid capacity?
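As a starting point for that audit, here is a rough sketch of an energy-per-token calculation. Watts measure power, not energy, so the sketch reports watt-hours per million tokens. All inputs (server power draw, throughput, electricity price, and the PUE facility-overhead multiplier) are hypothetical placeholders you would replace with your own measurements.

```python
def wh_per_million_tokens(avg_power_watts: float, tokens_per_second: float) -> float:
    """Energy in watt-hours to generate one million tokens.

    Watts are joules per second, so watts / (tokens per second) is joules
    per token; dividing by 3600 converts joules to watt-hours.
    """
    if tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    joules_per_million = avg_power_watts * 1_000_000 / tokens_per_second
    return joules_per_million / 3600


def energy_cost_per_million_tokens(
    wh_per_mtok: float, price_per_kwh: float, pue: float = 1.0
) -> float:
    """Electricity cost per million tokens, scaled by facility overhead (PUE)."""
    return wh_per_mtok / 1000 * pue * price_per_kwh


# Hypothetical server: 7,200 W average draw, 2,000 tokens/s sustained.
wh = wh_per_million_tokens(avg_power_watts=7_200, tokens_per_second=2_000)
cost = energy_cost_per_million_tokens(wh, price_per_kwh=0.10, pue=1.5)

print(f"{wh:.0f} Wh per million tokens")
print(f"${cost:.2f} electricity cost per million tokens")
```

If your vendor can't give you the power and throughput inputs, that is itself an answer about how exposed you are.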

Big Tech is vertically integrating from power plants to APIs. If energy becomes the bottleneck (and 40% of data centers will be power-limited by 2027), who gets priority?

The companies that own the power supply.