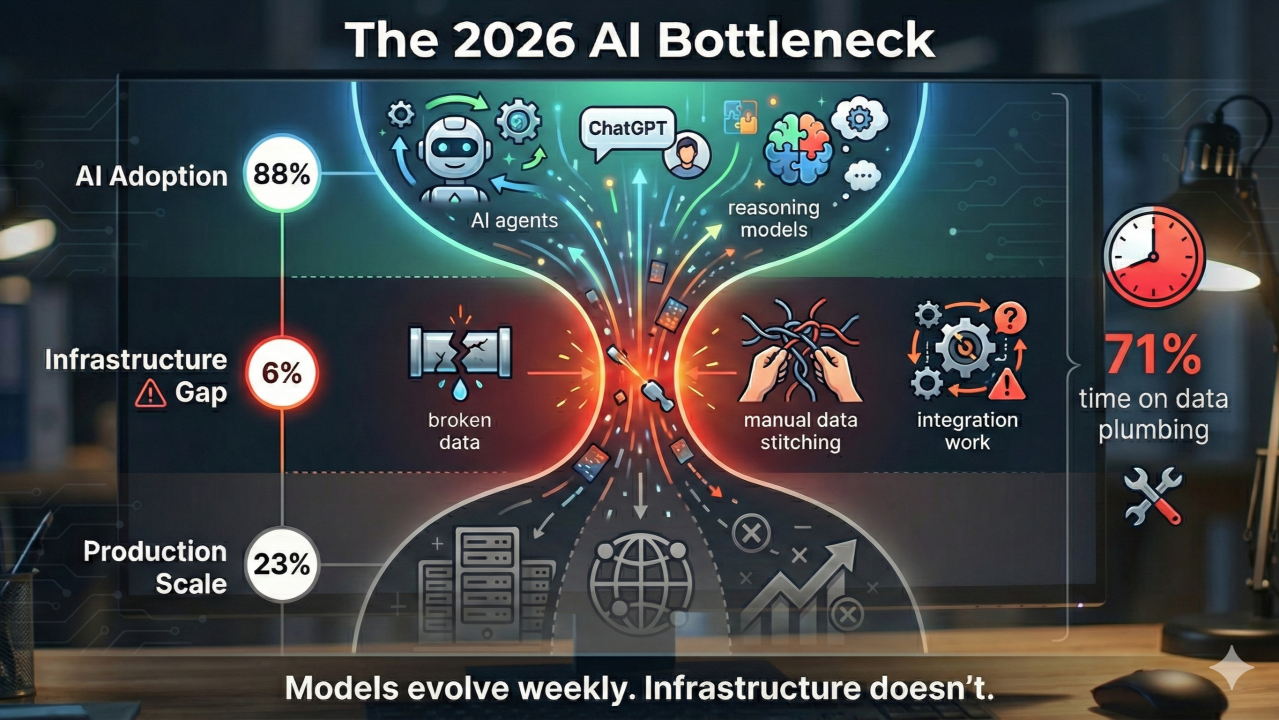

TL;DR: 88% of companies deployed AI, but only 6% have infrastructure ready. The real gap: 71% of time spent on manual data integration. Frontier workers (top 5%) are 6-17x more productive due to infrastructure, not talent. Budget shifted 76% toward buying platforms instead of building.

88% of companies adopted AI. Only 6% are ready for it.

Major reports dropped this week: McKinsey says nearly everyone’s using AI, CData says almost nobody has the infrastructure to support it.

Here’s what’s really happening: your teams are deploying AI agents, experimenting with reasoning models, scaling ChatGPT across departments. Meanwhile, they’re spending 71% of their time on “data plumbing” - manually stitching together data sources, building custom integrations, fixing broken pipelines.

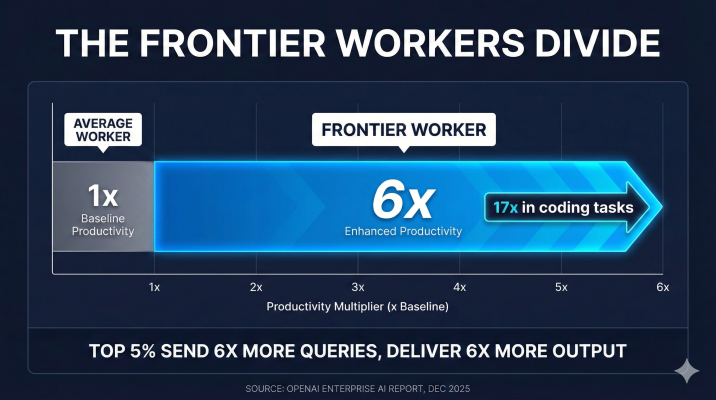

The paradox gets worse. OpenAI’s latest enterprise report shows a new class system emerging inside organizations. They call them “Frontier Workers” - the top 5% who’ve figured out how to actually use AI. These people are 6 times more productive than their peers (up to 17x for coding).

Think about that: same company, same tools, same access. One developer ships 17 times more code than another because they’ve mastered the tooling. This isn’t a skills gap. It’s an infrastructure gap masquerading as a training problem.

The data tells the story: McKinsey found 88% of organizations deployed AI in 2025, 62% are experimenting with agents and only 23% managed to scale agents to production. CData’s infrastructure report explains why - just 6% have real-time data systems that AI actually needs.

Your teams are burning hours on integration work that shouldn’t exist. According to CData, a typical AI use case requires pulling data from 6+ sources - without proper infrastructure, that’s manual work. Every. Single. Time.

Meanwhile, Menlo Ventures reports companies spent $37 billion on generative AI in 2025 - a 3.2x jump year-over-year. But here’s the shift: the buy-versus-build ratio flipped from 47% to 76%. Companies realized building AI infrastructure from scratch is a losing game.

The Infrastructure Bottleneck

The infrastructure bottleneck is your real challenge in 2026. Not model selection, not prompt engineering, not even AI governance, but whether your data layer can feed AI systems without human intervention.

Models are getting better every week, so should your infrastructure.

Sources:McKinsey: The state of AI in 2025,OpenAI: The state of enterprise AI,CData: Only 6% of AI Leaders Believe Their Data Infrastructure Is Ready,Menlo Ventures: State of Generative AI in the Enterprise

This Week’s AI Developments

1. The AI Reasoning War: Week of Breakthroughs

It’s the fastest innovation cycle AI has ever seen.

In seven days, we got Google Gemini 3 Deep Think hitting 45.1% on ARC-AGI, OpenAI shipping GPT-5.2 with 100% AIME performance, and the o3 model with deliberative alignment. Anthropic’s Claude Opus 4.5 is crushing SWE-bench at 80.9%.

The opportunity: breakthrough capabilities dropping every 7 days. Multi-step reasoning that actually works. Agents that can debug their own code. Models that explain their safety decisions.

The risk: if you’re not pinning API versions during this acceleration phase, your production systems will break. Hard.

Strategy for 2026: go multi-model. Use Gemini for research tasks, GPT for mathematical reasoning, Claude for code generation. The competitive war means no single vendor has the edge everywhere.

Sources:Google: Gemini 3 Deep Think,OpenAI: GPT-5.2 launch

2. Security Paradigm Shift: “Unsolvable” Prompt Injection

UK’s National Cyber Security Centre dropped a bomb on December 9: prompt injection “may never be fully fixed.” The guardrails approach - trying to filter malicious inputs at the LLM level - doesn’t work, and adversaries will always find new attack vectors.

The architectural pivot: air-gapping. AWS launched AI-Enhanced GuardDuty at re:Invent, using AI to monitor AI. OpenAI’s o3 introduced “deliberative alignment” - the model reasons about safety before acting. The EU opened an antitrust investigation into Google’s data scraping practices.

Action item for CTOs: zero agents get direct database write access. Every AI output goes through a validation layer. Treat agents as untrusted, always.

Sources:UK NCSC: Prompt injection may never be properly mitigated

3. Enterprise Partnership Explosion: The $17.7B Week

December 9 was the biggest enterprise AI deal day on record.

Accenture partnered with Anthropic to train 30,000 engineers on their “Reinvention Deployed Engineer” program. Microsoft committed $17.5 billion to Azure AI infrastructure in India - targeting 310 million workers. Snowflake extended their Anthropic partnership with $200 million, bringing “AI to data” instead of moving data to AI.

Anthropic hit $1 billion ARR with 40% enterprise market share. Claude Code launched in Slack, with Rakuten cutting software delivery cycles from 24 days to 5 days by eliminating context switching.

Slack is becoming an agentic hub: developers don’t want another tool to check, they want AI where they already work.

Sources:Accenture x Anthropic: Multi-Year Partnership,Microsoft: $17.5B India AI investment,Anthropic: Accenture partnership details

4. Sovereign AI and New Architectures

The architecture of AI infrastructure is shifting under our feet.

Mistral released Devstral 2 hitting 72.2% on SWE-bench with full on-premise deployment. AWS launched the Nova model family for vertical integration. Google shipped Deep Research Agent, generating autonomous 20-page research reports.

Three architectural trends: System 2 Reasoning requiring 60-120 seconds of async processing. Data Gravity replacing API calls - Snowflake’s model of bringing compute to data, not data to compute. Agentic Hubs like Slack replacing traditional SaaS workflows.

The 2026 question: does your infrastructure support these patterns, or are you stuck in 2024’s API-first world?

Sources:Mistral: Devstral 2 and Vibe CLI,AWS Nova announcement,Google Deep Research Agent

This week’s action items:

Infrastructure Audit- Are you in the 6% or the 94%? Audit real-time data capabilities across the 6+ sources your AI systems need. If your teams are doing manual data plumbing, you’re in the 94%.

Identify Frontier Workers- Find your 6x productivity outliers. They’ve cracked something the rest haven’t. Build a mentorship program to close the gap, or watch the productivity divide destroy team morale.

Multi-Model Budget Planning- Segment AI spending by use case. $200/month reasoning models for senior engineers solving complex problems. Commodity models for routine tasks. Implement role-based access control before costs spiral.

Security Architecture Review- Assume all agents are compromised. Build a validation layer between AI outputs and database writes. Air-gap critical systems. UK NCSC is right: prompt injection won’t be “solved.”

Data Over Models Investment- Shift budget from AI licenses to Data Ops. If 71% of time goes to data plumbing, that’s where the ROI lives. Real-time data infrastructure, semantic layers, API consolidation.

The infrastructure gap is the real story of 2025: models improve weekly, but your data layer stays the same.

Is your infrastructure ready, or are you part of the 94%?

Frequently Asked Questions

What percentage of companies are actually ready for AI?

McKinsey reports 88% of companies deployed AI in 2025. However, CData’s infrastructure analysis found only 6% believe their data infrastructure is ready. The remaining 82% spend 71% of their AI time on manual data plumbing—stitching together integrations that should be automated.

How much more productive are frontier workers?

OpenAI’s enterprise report identifies “Frontier Workers” (the top 5% who’ve mastered AI tooling) as 6 times more productive than peers overall. For software developers specifically, the productivity multiplier reaches 17x. This gap stems from infrastructure access and workflow optimization, not raw talent differences.

Why did companies shift from building to buying AI infrastructure?

Menlo Ventures reports the buy-versus-build ratio flipped from 47% buying in 2024 to 76% buying in 2025, representing $37 billion in spending. Companies realized building AI infrastructure from scratch delivers negative ROI compared to adopting mature platforms with real-time data integration.

How many data sources does typical AI work require?

CData found that typical AI use cases pull from 6+ data sources. Without real-time infrastructure, each integration requires manual setup. This creates the 71% time-on-plumbing problem cited by McKinsey, where teams spend more time integrating data than using AI.